SAP has revised the licensing for SAP Solution Manager over the last few years.

Questions that will be answered in this blog are:

- Do I need a user license for SAP Solution Manager users?

- If I run SAP Solution Manager on HANA, do I need to pay for HANA database licenses?

- How can I get Focused Build and Focused Insights?

- What about Focused Run licenses?

User licenses for SAP Solution Manager

As of 1 January 2018, SAP dropped the requirement for named users in SAP Solution Manager.

HANA database licenses

If you want to run SAP Solution Manager on a HANA database, you only need to procure the infrastructure. The HANA database rights are included in SAP Solution Manager. This is the only exception SAP makes. For all other use cases you need to pay for the HANA database license as well.

Using SAP Solution Manager for non-SAP components

You can use SAP Solution Manager to manage non-SAP components as well. The ITSM service desk component in particular can be used for this. When you use this function for non-SAP components, you need SAP Enterprise Support rights for SAP Solution Manager instead of SAP Standard Support.

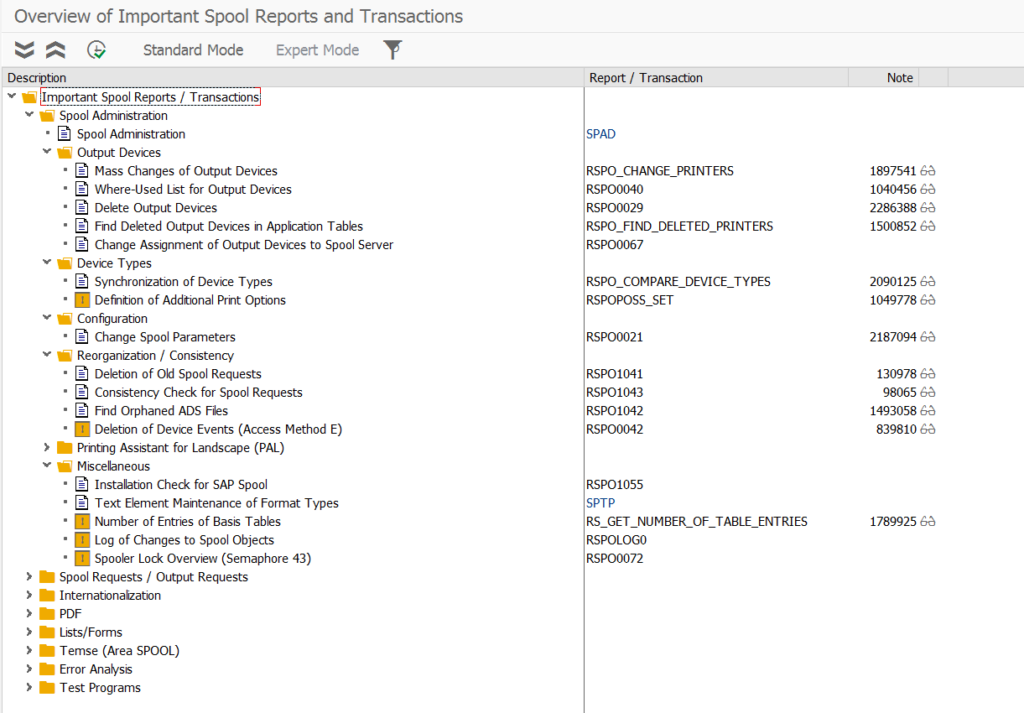

Focused Insights and Focused Build

SAP offers Focused Insights and Focused Build as extra options on top of SAP Solution Manager. Both are installed as add-ons. Focused Insights brings extra dashboard-building capabilities. Focused Build gives you tighter control over your solution build process.

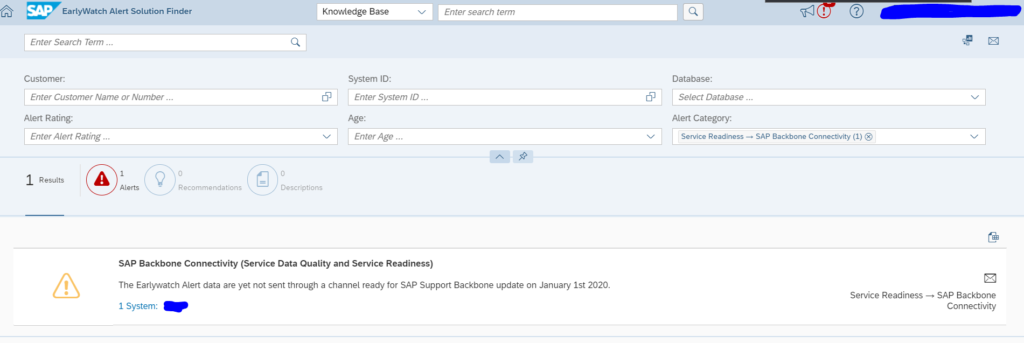

As of 1 January 2020, both solutions are part of the standard maintenance contract. See also OSS note 2361567 – ST-OST Usage Rights and Support.

If you want to try out these solutions, you can use the available free SAP demo system. Read more about this in the following blog.

SAP Focused Run now also covers the functionality of Focused Insights, but in a far superior and better-performing way. Read more in this blog.

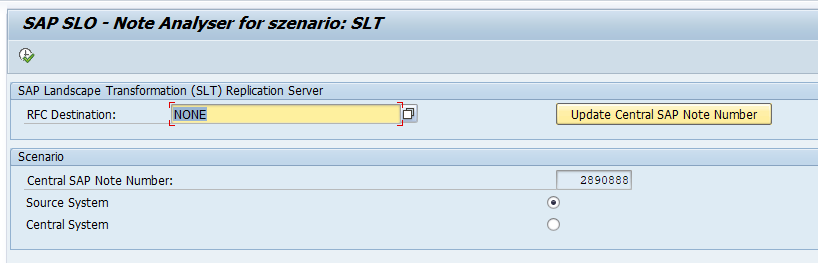

Focused Run

Focused Run is a separate solution with a separate license, designed to optimize the running of large SAP landscapes. Focused Run does NOT run on SAP Solution Manager. It runs in a separate environment and only on SAP HANA. You cannot combine Focused Run and SAP Solution Manager in a single installation. More information on Focused Run can be found on the SAP site and on the specialized SAP Focused Run Guru site.

For licenses of Focused Run, read this dedicated blog.

Although Focused Run is a paid solution, it is by far the most sophisticated product and offers the most added value.

More background information

More information can be found on the SAP Solution Manager usage rights website.